Overview

MCPFarm.ai is the infrastructure layer for agentic AI ecosystems. It takes isolated MCP (Model Context Protocol) servers — each providing specialized tools — and multiplexes them through a single gateway with authentication, rate limiting, observability, and a real-time dashboard. Think of it as the central nervous system that all AI agents connect through for governed tool access.

Instead of each agent maintaining its own tool integrations (fragile, ungovernable, impossible to audit), MCPFarm provides a centralized farm where tools are registered, discovered, monitored, and controlled. Agents connect once via the SDK or REST API, and get access to the entire tool catalog — with every invocation logged, metered, and traceable.
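To make "connect once via the REST API" concrete, here is a minimal sketch of how an agent might build one authenticated tool call. The source only confirms that REST routes live under /api/*. The gateway address, the `/api/tools/{tool}/invoke` route, the Bearer header scheme, the payload shape, and the `a`/`b` argument names are all assumptions made for illustration, so check the real API before relying on any of them.

```python
import json
import urllib.request

GATEWAY_URL = "http://localhost:8000"  # assumed local gateway address


def build_invoke_request(api_key: str, tool: str, arguments: dict) -> urllib.request.Request:
    """Build (but do not send) a POST asking the gateway to run one tool."""
    body = json.dumps({"arguments": arguments}).encode("utf-8")  # assumed payload shape
    return urllib.request.Request(
        url=f"{GATEWAY_URL}/api/tools/{tool}/invoke",  # hypothetical route under /api/*
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {api_key}",  # assumed auth header scheme
            "Content-Type": "application/json",
        },
    )


# The request is only constructed here; sending it would need a running gateway.
request = build_invoke_request("example-key", "calc_add", {"a": 2, "b": 3})
print(request.get_method(), request.full_url)
```

Because every call carries the key, the gateway can attribute each invocation to a specific agent, which is what makes the logging and metering described above possible.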

MCP Server Farm

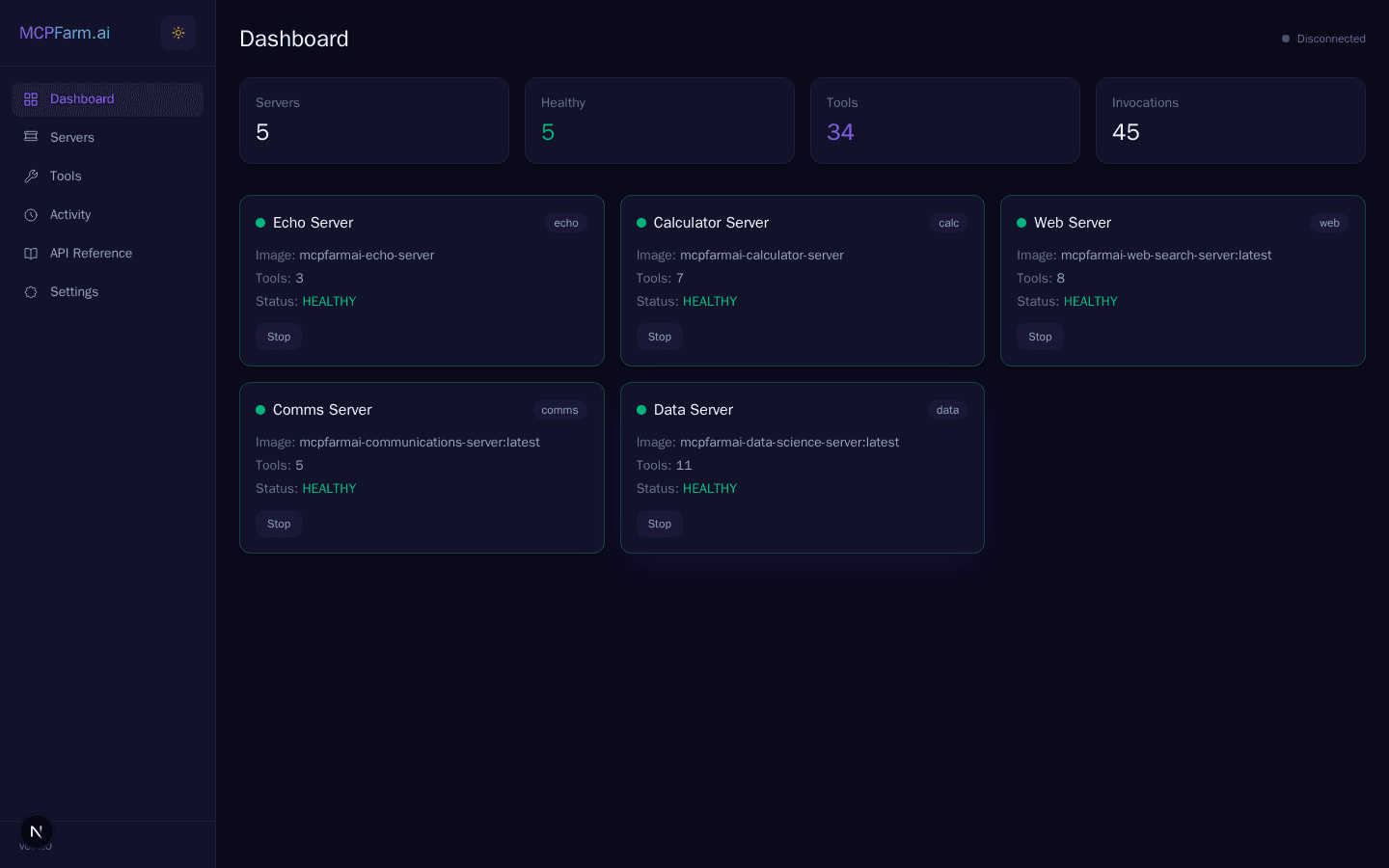

Five specialized servers auto-discovered via Docker labels, each namespaced to prevent tool collisions

Echo Server

Testing & validation

Calculator

Mathematical computation

Web Search

Tavily search, news, crawl, extract

Data Science

NumPy, Pandas, statistics, sampling

Communications

Gmail send/read/search + WhatsApp

Real-Time Dashboard

Full visibility into server health, tool registry, invocations, and API management

Dashboard — System health, server status, and tool activity at a glance

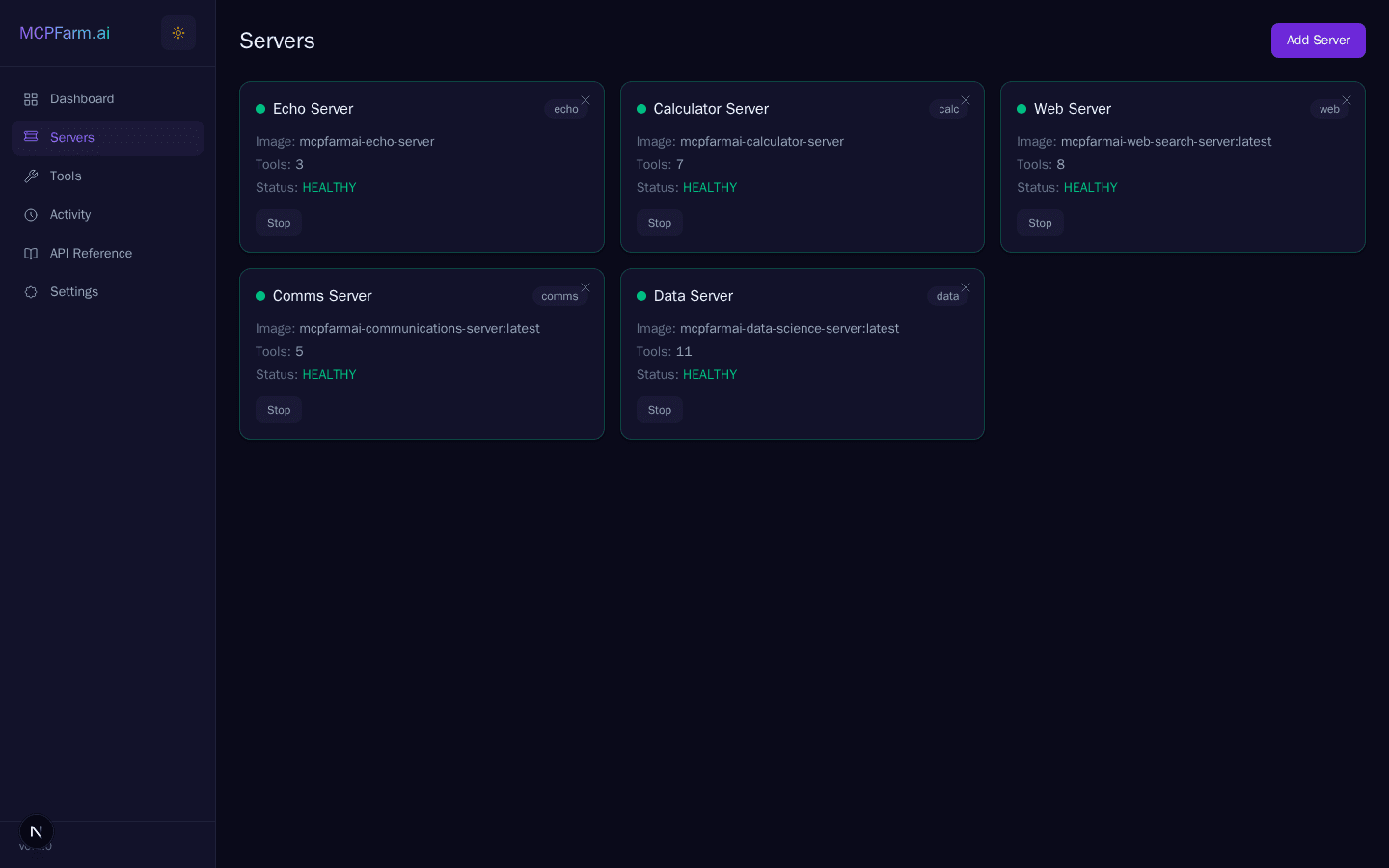

Server Management — Docker-discovered MCP servers with health status

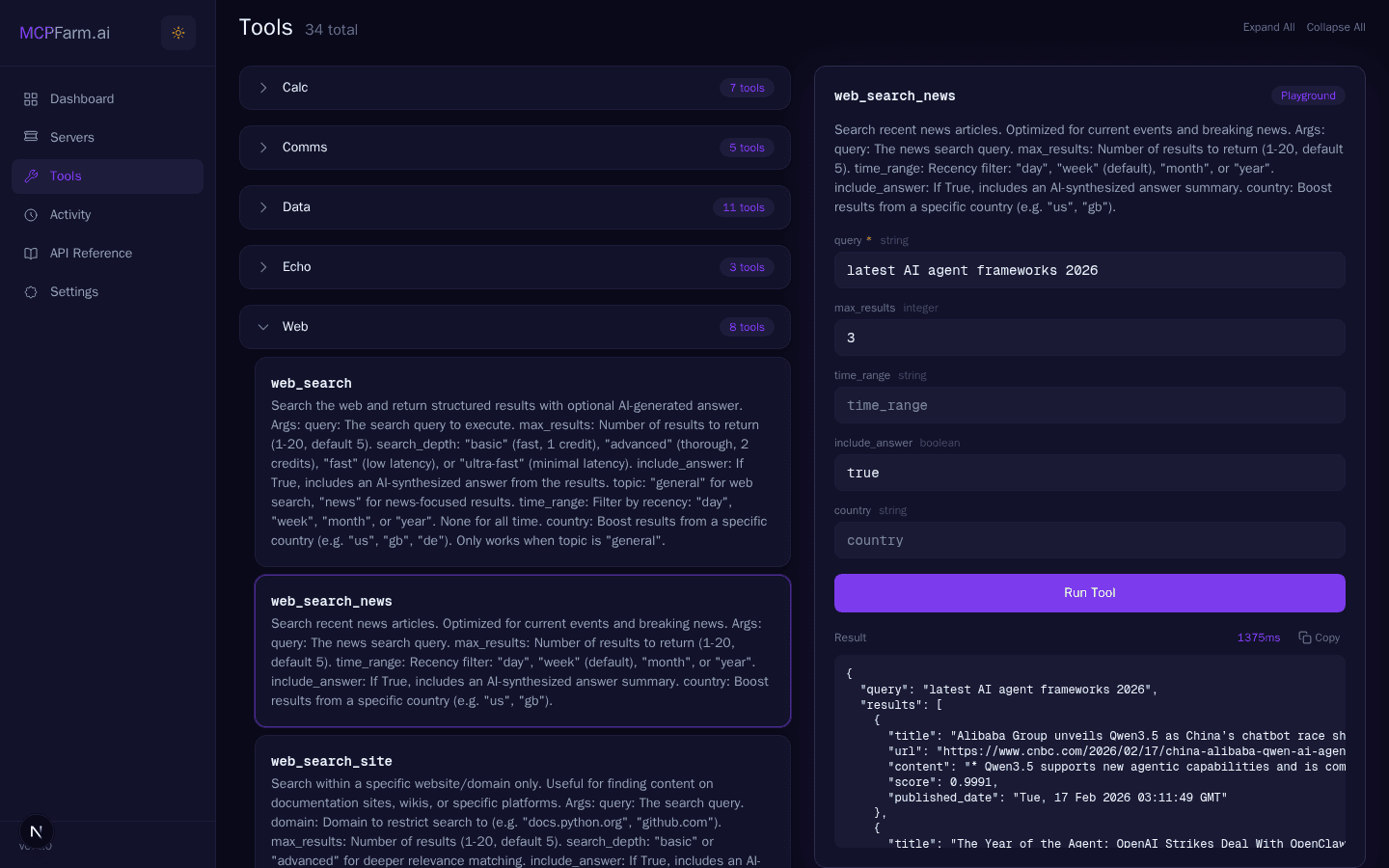

Tool Playground — Test any tool interactively with live JSON results

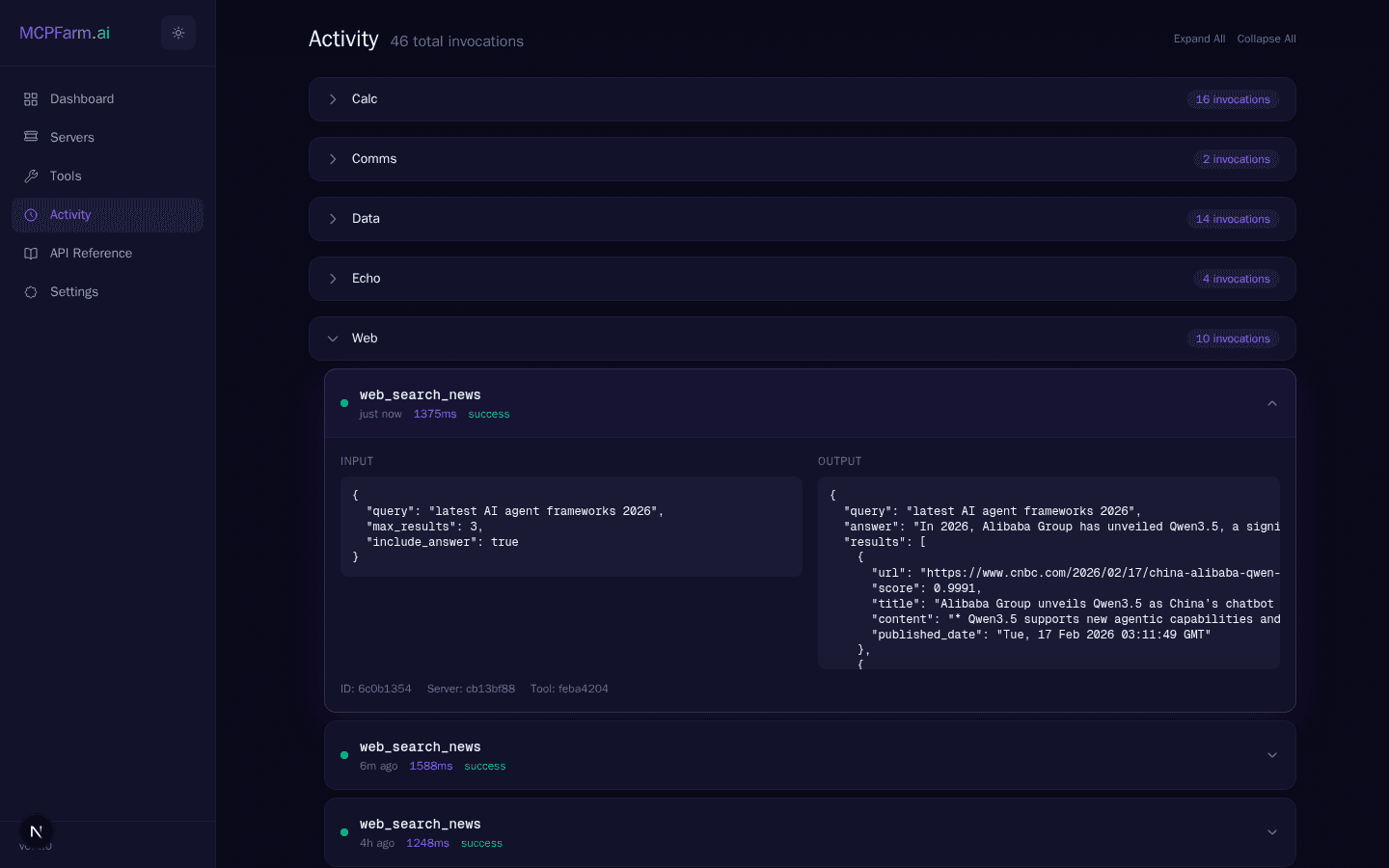

Activity Monitor — Real-time WebSocket feed of every tool invocation

Key Features

Dynamic Server Management

Add, remove, and restart MCP servers without gateway downtime. Docker label-based auto-discovery.

Unified Tool Registry

34 tools across 5 servers, namespaced to avoid collisions. Redis-cached for sub-millisecond lookups.

Real-Time Activity Monitor

WebSocket-powered live dashboard showing every tool invocation, latency, and error in real time.

API Key Authentication

Scoped API keys with rate limiting, expiration, and audit logging. Admin vs. user key separation.

Python SDK + LangChain

First-class SDK with LangChain tool adapters for LangGraph and CrewAI agent integration.

Prometheus + Grafana

Pre-built observability stack — HTTP metrics, tool invocation counters, latency histograms, server health gauges.

Architecture

┌───────────────────────────────────────────────────────────┐
│  AI Agents (Commander.ai, ProblemSolver.ai, CrewAI...)    │
│  Connect via SDK, REST API, or MCP Protocol               │
└────────────────────────┬──────────────────────────────────┘
                         │
┌────────────────────────▼──────────────────────────────────┐
│  MCPFarm Gateway (FastAPI + FastMCP)                      │
│  ┌──────────┬──────────┬──────────┬────────────────────┐  │
│  │ REST API │ MCP /mcp │ WS /ws   │ Prometheus /metrics│  │
│  │ /api/*   │ Protocol │ Real-time│ Observability      │  │
│  └──────────┴──────────┴──────────┴────────────────────┘  │
│  ┌─────────────────────────────────────────────────────┐  │
│  │ Auth (API Keys) │ Rate Limiting │ Audit Log         │  │
│  └─────────────────────────────────────────────────────┘  │
│  ┌─────────────────────────────────────────────────────┐  │
│  │ Tool Registry (Redis + PostgreSQL)                  │  │
│  │ 34 tools, namespaced, cached, auto-discovered       │  │
│  └─────────────────────────────────────────────────────┘  │
└────────────────────────┬──────────────────────────────────┘
                         │ Docker API
┌────────────────────────▼──────────────────────────────────┐
│  MCP Server Farm (Docker Containers)                      │
│  ┌────────┐ ┌──────────┐ ┌──────────┐ ┌──────┐ ┌──────┐   │
│  │ Echo   │ │Calculator│ │Web Search│ │Data  │ │Comms │   │
│  │ 3 tools│ │ 7 tools  │ │ 8 tools  │ │Sci 11│ │5 tool│   │
│  └────────┘ └──────────┘ └──────────┘ └──────┘ └──────┘   │
└───────────────────────────────────────────────────────────┘

Why Centralized MCP Governance

In enterprise AI, the risk isn't the LLM — it's the tools the LLM can call. When agents have direct access to APIs, databases, and external services, every tool call is a potential blast radius. MCPFarm inverts this model: tools live in a governed farm, agents get scoped access through authenticated keys, and every invocation is logged, metered, and auditable.

This is the same principle behind enterprise API gateways (Kong, Apigee) — but purpose-built for the MCP protocol that AI agents speak natively. Rate limit a runaway agent. Revoke a compromised key. Trace which agent called which tool with what arguments. Observe latency spikes before they cascade. The farm is the control plane for agentic risk.
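The controls named above (scoped keys, rate limiting, revocation, audit trails) can be sketched as a single gate that every invocation passes through. This is an illustration under stated assumptions, not MCPFarm's implementation: the `ApiKey` and `Gate` names, the token-bucket algorithm, the use of the tool-name prefix as the scope, and the audit record fields are all hypothetical.

```python
import time
from dataclasses import dataclass


@dataclass
class ApiKey:
    key_id: str
    scopes: frozenset      # tool namespaces this key may call (assumed scope model)
    rate_per_sec: float    # token refill rate
    burst: int             # bucket capacity
    revoked: bool = False


class Gate:
    """Hypothetical gate: authenticate, check scope, rate limit, then audit."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._keys = {}
        self._buckets = {}   # key_id -> (tokens, last_timestamp)
        self.audit_log = []  # one record per attempt, allowed or not

    def add_key(self, key):
        self._keys[key.key_id] = key
        self._buckets[key.key_id] = (float(key.burst), self._clock())

    def revoke(self, key_id):
        self._keys[key_id].revoked = True

    def _take_token(self, key):
        # Token bucket: refill by elapsed time, cap at burst, spend one per call.
        tokens, last = self._buckets[key.key_id]
        now = self._clock()
        tokens = min(float(key.burst), tokens + (now - last) * key.rate_per_sec)
        allowed = tokens >= 1.0
        if allowed:
            tokens -= 1.0
        self._buckets[key.key_id] = (tokens, now)
        return allowed

    def authorize(self, key_id, tool, arguments):
        key = self._keys.get(key_id)
        namespace = tool.split("_", 1)[0]  # "calc_add" -> "calc"
        if key is None or key.revoked:
            decision = "denied:invalid_key"
        elif namespace not in key.scopes:
            decision = "denied:scope"
        elif not self._take_token(key):
            decision = "denied:rate_limited"
        else:
            decision = "allowed"
        self.audit_log.append(
            {"key": key_id, "tool": tool, "arguments": arguments, "decision": decision}
        )
        return decision == "allowed"


gate = Gate()
gate.add_key(ApiKey("agent-1", frozenset({"calc"}), rate_per_sec=1.0, burst=2))
print(gate.authorize("agent-1", "calc_add", {"a": 1, "b": 2}))
print(gate.audit_log[-1]["decision"])
```

Recording denied attempts alongside allowed ones is a deliberate choice in this sketch: a runaway or compromised agent shows up in the audit log even when every one of its calls is blocked.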

Technology Stack

Gateway

Frontend

Data Layer

Infrastructure

SDK

Observability

Part of the AI Ecosystem

Five specialized platforms designed to compose — MCPFarm is the infrastructure layer they all connect through

Commander.ai

Orchestration & Command

Multi-agent task decomposition and coordination — the brain that delegates work across specialist agents.

WorldMaker.ai

Lifecycle Intelligence

Enterprise digital asset lifecycle analysis — understands what exists, how it connects, and generates code.

ml-pipeline.ai

Autonomous ML

Self-improving ML pipeline — raw data to trained model with LLM-powered Critic review loops.

MCPFarm.ai

Tool Infrastructure

Kubernetes for AI tools — centralized MCP gateway that all agents connect through for governed tool access.

ProblemSolver.ai

Recursive Problem Decomposition

Five-agent pipeline with revision loops that breaks complex problems into structured solutions.

Implementation Highlights

Docker Label Auto-Discovery

Label a container with mcpfarm.type=mcp-server, and the gateway detects it, connects, inventories its tools, and begins health monitoring. Remove the container and its tools de-register. Zero-config server lifecycle.

Three-Protocol Access

Namespaced Tool Registry

Every tool name carries a prefix for the server that provides it: calc_add, search_web, ds_dataframe. This prevents collisions when multiple servers implement similar functionality and makes audit logs unambiguous about which server handled which call.
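The label-based discovery and the namespaced registry described in these highlights can be sketched together. To keep the example self-contained, containers are plain dictionaries rather than results from the Docker API. The `discover` function, the `ToolRegistry` class, the server-to-prefix mapping, and the tool inventories are hypothetical; only the mcpfarm.type=mcp-server label and the prefixed tool names come from the text.

```python
LABEL = ("mcpfarm.type", "mcp-server")  # label named in the text above


def discover(containers):
    """Return the names of containers that carry the MCP server label."""
    key, value = LABEL
    return [c["name"] for c in containers if c.get("labels", {}).get(key) == value]


class ToolRegistry:
    """Hypothetical registry mapping qualified tool names to their server."""

    def __init__(self):
        self._tools = {}  # e.g. "calc_add" -> "calculator"

    def register(self, server, namespace, tool_names):
        for name in tool_names:
            qualified = f"{namespace}_{name}"
            if qualified in self._tools:
                raise ValueError(f"tool name collision: {qualified}")
            self._tools[qualified] = server

    def deregister(self, server):
        # Drop every tool owned by a server whose container went away.
        self._tools = {t: s for t, s in self._tools.items() if s != server}

    def owner(self, tool):
        return self._tools.get(tool)

    def names(self):
        return sorted(self._tools)


# Simulated container metadata; a real gateway would read this from Docker.
containers = [
    {"name": "calculator", "labels": {"mcpfarm.type": "mcp-server"}},
    {"name": "web-search", "labels": {"mcpfarm.type": "mcp-server"}},
    {"name": "postgres", "labels": {}},
]
prefixes = {"calculator": "calc", "web-search": "search"}  # assumed mapping
inventories = {"calculator": ["add"], "web-search": ["web"]}  # assumed tool lists

registry = ToolRegistry()
for server in discover(containers):
    registry.register(server, prefixes[server], inventories[server])

print(registry.names())           # ['calc_add', 'search_web']
registry.deregister("calculator")
print(registry.names())           # ['search_web']
```

Keeping the owning server next to each qualified name is what lets an audit record state which server handled a call without any extra lookup.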